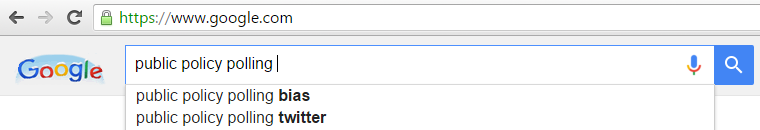

Is Public Policy Polling a Reliable Source? No.

By MATTHIAS SHAPIRO

If you’re on the phone with Public Policy Polling, just hang up.

Because you deserve better than to have your time wasted by a polling firm that crowd-sources polling questions on Twitter from biased activists and has demonstrated little commitment to honest data.

Public Policy Polling tweeted last Friday that Republicans are so dumb and vicious that they want to bomb places even if they don’t know what they are.

30% of Republican primary voters nationally say they support bombing Agrabah. Agrabah is the country from Aladdin. #NotTheOnion

— PublicPolicyPolling (@ppppolls) December 18, 2015

They followed up with the actual document on the GOP results and (a few thousand retweets later) released the Democrat results.

My biggest frustration here is that this question was obviously added because it plays well with a clickbait economy that thrives on confirmation bias. It’s the Gawker-ization of what should be accurate and informative media. Ploys for clicks and clicks and more clicks. Hate clicks. Stupid clicks, smart clicks. It’s all about getting clicks at whatever cost. I feel very strongly that serious, professional organizations need to eschew clickbait, and I get upset when they exploit it. I despise it so much that I try hard not to engage with or link to clickbait (although maybe I should start using DoNotLink). My compromise with myself is to link only to the actual PDF.

Dan McLaughlin, who is an excellent and honest poll watcher, noted that Public Policy Polling actually admitted to crowdsourcing this question from a far left blogger as a way of trolling their own respondents. While Salon columnist Amanda Marcotte may be on the reading list for the PPP team, she is not a great inspiration for accurate, objective data and it is certainly inappropriate to take her suggested “gotcha” questions and integrate them into actual polling practice.

McLaughlin’s reflection on this poll is really good, but the core of his argument is that this should teach us to be skeptical of all issue polling.

“But really, you should not take these troll polls seriously, and in fact they should teach educated poll consumers to be skeptical about all issue polling. Why did 55% of Democrats and 43% of Republicans answer a question about bombing a fictional country? Partly, one assumes, because it didn’t occur to them that the pollster would take advantage of them by asking a question that assumed facts that do not exist. Partly because people in general do not like to admit there are things they do not know. Partly because people do assume there are all sorts of little countries out there they have never heard of, a fair number of which (e.g., former Soviet republics, parts of the old Yugoslavia, breakaway African states) didn’t exist 20 or 30 years ago, and that some of these are unstable places that may house the occasional wretched hive of scum and villainy. And partly because answers to issue polling questions tend to vary a lot by what the pollster says before asking them.”

McLaughlin also noted that the GOP respondents were asked this question after they were asked eight other questions about terrorism, while Democrat respondents were asked this question but none of the preceding questions.

This is the functional equivalent of my asking someone eight questions about genetics and genetics policy, then asking, “Should we prosecute GAC (Gattaca Aerospace Corporation) for their use of genetic profiling in rejecting applicants?” … and then going on Twitter to make fun of how my respondents want to prosecute a fictional company from the movie “Gattaca.”

Although we should probably admit that the Ethan Hawke character is totally guilty of a terrible fraud in “Gattaca,” endangering the lives of his co-workers in order to fulfill his childhood dream of playing astronaut.


By adding this question, Public Policy Polling is admitting that they don’t take their work seriously, they don’t take their polls seriously, and they don’t take data seriously.

Some people believe more data is always a good thing. But there is a big difference between a poll or a survey that is conducted with integrity and serious thought and one that includes “silly” questions. “Silly” is how Public Policy Polling described their own question. “Silly” is their word, not mine. They stated plainly that they did this in order to “see if people would reflexively support bombing something that sounded vaguely Middle Eastern.”

The problem is that this poll question doesn’t do that. In fact, it doesn’t tell us anything about anyone. What it does is give us a stupid question against which we can overlay our own biases. Using this poll, we could conjecture that:

- more Democrats than Republicans think Agrabah is a real city

- Martin O’Malley supporters are the dumbest and also the most bloodthirsty of the Democrat party, polling so high on pro-bombing they give Donald Trump supporters a run for their money

- Ben Carson supporters are the peaceniks

But these conjectures would be nothing more than us looking at bad data and trying our hardest to draw the conclusions we want out of them. This question is a Rorschach test for us to take what we want to believe, assign a data point to it, and then preen in our own self-satisfaction that our bias about the “other team” has been confirmed.

I hated that Democrats used this to confirm their biases about Republicans and then I hated that Republicans turned around and used the SAME BAD DATA when it confirmed their biases about Democrats. Digging through bad data and discovering that, hidden in the crosstabs, there is something that we can use to hit back at the “other team” does not suddenly make the data valid.

This poll does not mean that Democrats are smug and stupid and more likely to have opinions about fake cities than Republicans. And it doesn’t mean that Republicans want to bomb everything. This data is meaningless. It is a troll, a fake question with fake responses upon which we can overlay our biases.

This nonsensical poll is exactly what a responsible pollster tries to avoid. This is why we learn how to construct honest studies, why psychology is important in polling. But Public Policy Polling took a question designed to play to a specific activist’s confirmation bias and made it a part of their poll. The subsequent tweet took it even further, encouraging people to revel in bias, divorced from context, before they could even read the full poll. The whole exercise played out in a way that was meant, from start to finish, to tell the story they hoped it would tell. They traded professionalism for clicks, sold their integrity for a few thousand retweets.

And then … they mocked people who disagreed with them on Twitter. The entire episode was revolting to anyone who thinks media and polling are important, serious enterprises.

Public Policy Polling doesn’t care about integrity when it comes to data. Don’t engage with them … and don’t take their polls seriously.

They definitely don’t.

Clicky-clicky-click-click-click.

Matthias Shapiro is a software engineer, data vis designer, genetics data hobbyist, and technical educator based in Seattle. He tweets under @politicalmath, where he is occasionally right about some things.