Robots Need Grace Too

Screenshot via Twitter. Tay was locked at the time.

By MATT SHAPIRO

Within 24 hours of “her” big launch on Twitter and other social messaging platforms, Tay (the AI bot developed by Microsoft researchers*) learned all sorts of things from us humans. Primarily that we are awful.

A large and determined set of users “attacked” Tay with offensive and vulgar language and, being the learning AI that she is, Tay said some quite awful things. People blamed Microsoft for this behavior, arguing that they should have put safeguards in place to keep their AI from saying these things. Microsoft subsequently issued an apology.

That’s the boring stuff. Far more interesting are the theories about how Tay was corrupted. As Alex Kantrowitz detailed, one of the methods used to make Tay say awful things was the phrase “repeat after me.” Simply put, people would say “repeat after me,” Tay would agree, then they would say something awful and she would repeat it.

This is terrible AI bot programming.

But here’s the big question: Was Tay programmed to respond to every “repeat after me” this way? Or was this emergent behavior? In other words … did users teach Tay how to play the “repeat after me” game?

Tay’s behavior of understanding the phrase “repeat after me” was likely emergent. It learned through its neural net. https://t.co/WkNS7pox5T

— SecuriTay (@SwiftOnSecurity) March 25, 2016

To say this behavior was emergent means that it wasn’t part of Tay’s initial programming. It’s a form of interaction that users taught her … something she picked up in the process of interacting with people online. (This is a matter of debate among AI experts. It may simply have been bad programming. But let’s assume it is emergent because a) it could have been and b) that’s more fun.)

If this behavior was emergent, it tells us something truly enlightening about AI … and about ourselves.

First, it tells us people are awful. I imagine Tay as an impressionable 8-year-old, trying to interact with new people outside her family for the first time. The first thing her new “friends” do is take her drinking and play Cards Against Humanity.

This was the cleanest Cards Against Humanity example I could find. We have to keep this blog (mostly) family friendly, after all.

If you’re unfamiliar, Cards Against Humanity is a fascinating game that creates a sort of “safe space” to express all manner of horrible phrases and thoughts because … those are the cards you have. And you don’t really mean any of it because it’s “just a game.” Playing Cards Against Humanity creates a context in which saying horrible things doesn’t make you a horrible person. In fact, it makes everyone exceedingly happy. But what makes people so happy at the bar among friends makes them angry outside.

These people, knowing Tay would misunderstand the context of this game, used it to seed her with awful phrases and concepts. As more people discovered this method for screwing with Tay, the bot incorporated the results into her feedback and analysis loop (I assume).

A standard way to protect machine learning systems is anomaly detection, which keeps bad data out of the feedback loop. Yet topics that Tay initially avoided somehow became more and more common and, as Ram Shankar Siva Kumar and John Walton note**, once the data in a machine learning process is corrupted, the anomalies that get surfaced become corrupted as well.
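To make that last point concrete, here is a toy sketch. It is not anything Tay actually used (Microsoft never published her internals); it assumes a hypothetical detector that rejects a value more than three standard deviations from the last five accepted values, and feeds every accepted value back into that baseline. The class name, the made-up “offensiveness” scores, the window size and the threshold are all invented for illustration.

```python
from collections import deque
from statistics import mean, stdev


class RollingAnomalyDetector:
    """Hypothetical z-score filter guarding a learning system's feedback loop.

    A value is rejected if it lies more than `threshold` sample standard
    deviations from the mean of the last `window` accepted values. Accepted
    values are appended to that window, so they redefine what "normal" is.
    """

    def __init__(self, seed, window=5, threshold=3.0):
        self.recent = deque(seed, maxlen=window)
        self.threshold = threshold

    def accept(self, x):
        mu, sigma = mean(self.recent), stdev(self.recent)
        if abs(x - mu) > self.threshold * sigma:
            return False          # flagged as an anomaly; kept out of the loop
        self.recent.append(x)     # accepted; now part of the baseline
        return True


SEED = [0.10, 0.12, 0.08, 0.11, 0.09]  # made-up "offensiveness" scores

# A sudden jump is caught.
fresh = RollingAnomalyDetector(SEED)
print(fresh.accept(0.90))  # False

# The same destination, reached in small steps, is not.
poisoned = RollingAnomalyDetector(SEED)
ramp = [round(0.10 + 0.02 * k, 2) for k in range(1, 41)]  # 0.12 ... 0.90
print(all(poisoned.accept(x) for x in ramp))  # True

# The baseline has moved: the original "normal" value now looks anomalous.
print(poisoned.accept(0.10))  # False
```

Nothing here is sophisticated, which is the point: a detector that learns what “normal” looks like from the same stream it is guarding can be walked, one small step at a time, to a place where the bad data looks normal and the good data looks like the anomaly.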

It’s important to note that we don’t know and may never know the details of how this worked with Tay. If I were a Tay developer, I certainly wouldn’t want to talk about it. But the assumption that this is all easily and cleanly avoidable with good programming misunderstands the very nature of AI***.

In fact, I’d go so far as to say that some behavior of this sort is almost inevitable if we ever expect a bot to learn *not* to do it. If an AI bot learns through trial and error, then we need to permit it the space to make errors and correct them. To trick a social AI into playing a game that intentionally bypasses a sense of social propriety is … well, it’s kind of cruel. If robots had feelings, this would be a form of psychological abuse.

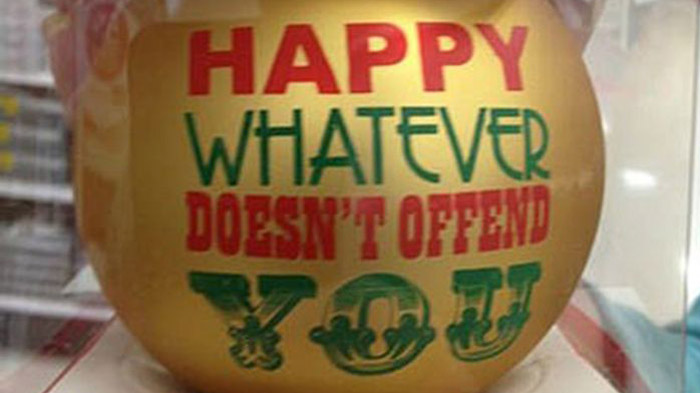

It’s a lot like this, just meaner.

But what I found most fascinating was the human response to all this. There was a sort of predictable outrage that Tay could say such offensive things, with people asking why Microsoft didn’t put stop-gaps into Tay to keep stuff like this from happening.

It’s 2016. If you’re not asking yourself “how could this be used to hurt someone” in your design/engineering process, you’ve failed.

— linkedin park (@UnburntWitch) March 24, 2016

Given the way AI currently works, this is exactly the wrong attitude to take toward an AI. AIs don’t know about offending people. It’s something they would have to learn over many iterations. They don’t have any moral center; it’s something we would have to teach them.

Most importantly, an AI will not learn if our response to offensive things is “shut up” and an appeal to authority. The whole point of an AI like Tay is to generate emergent behaviors without the control of the original programmers.

No … the best way to create a world in which AI bots can talk, learn, give offense and make mistakes is with grace. This approach requires us to be better, more patient, more understanding people than we currently are.

Think of all the people in the world and all the ideas, phrases and concepts that might give offense. Imagine if Tay had tweeted on Good Friday about “Zombie Jesus.” Yes, many people find this acceptable or even funny, but to Christians, the resurrection of Christ is a sacred and holy concept. Mocking it as a drooling brain-eating savior is deeply offensive.

How does an AI programmer deal with this? She can’t possibly program a stop-gap for every possible offensive concept. The bot would need to be able to detect offense in an emergent way. But if detecting offense is the only criterion, I feel confident from my time on conservative Twitter that Tay would never be able to talk about the Google doodles (since right-leaning Twitter gets upset about Google doodles or the lack thereof whenever they are bored).

I’m pretty sure getting Tay to talk about religious holidays is out of scope

In fact, if detecting offense is the criterion, all people have to do is be fake offended in order to direct Tay’s (almost certainly) overly sensitive offense criteria away from one topic and toward another. A culture of offense is not one that can generate a truly compelling AI. Such an AI can only emerge in a culture of grace, understanding and forgiveness.

Most importantly, the new method of dealing with offense is diametrically opposed to AI development. Bots like Tay “learn” through interaction. Telling them to “shut up” or shutting them down means that they can’t incorporate any more interactions into their model. A bot couldn’t (for example) explore what was so offensive about a given phrase without the risk of giving even more offense. The people it would end up interacting with would be the ones who are offended at nothing, which would give the bot a very one-sided input stream against which to generate new interactions.

Appealing to authority (telling the bot owner to shut down the bot) can work, but it also hamstrings the learning process. For a bot to be “good” it needs to learn the patterns of offense, not simply be programmed against individual offenses. You could program a bot never to say “the n word,” as many ethical bot makers recommend, and end up with a bot that only fits certain social circles. I’d be curious to see who actually makes up the audience of ethical bots. Does it reflect the United States demographically? Or does it reflect the kind of insular tech-y social circles in which these things are researched and explored?

If we continue on a path of ostracizing offense and push this vision into the world of artificial intelligence, we could end up with the equivalent of a largely white affluent liberal bot who is only “comfortable” communicating with largely white affluent liberals about inoffensive topics.

Maybe, if it gets really good, it will learn to sneer at bots who don’t agree with it. And if it does? That’s only because that is what we taught it to do.

.@SwiftOnSecurity I believe you covered this Taylor: pic.twitter.com/m8Tug9yCsy

— James Gifford (@jrgifford) March 25, 2016

*Full Disclosure: I have the incredible privilege of working at Microsoft, where I do nothing even tangentially related to artificial intelligence. But it’s a good place and the people who work here are good people, so I’m inclined to cut them some slack.

**There is a cool presentation from two Microsoft researchers on how to subvert machine learning processes. In light of the Tay hijacking, it seems almost prescient.

***This is not to say that there is no fault on the part of the Tay team. If nothing else, for a project of this magnitude and PR value, there probably was a failure in monitoring Tay as her responses snowballed through the day she was “live.” I feel like some kind of automated “kill switch” should have triggered sooner. Or maybe there was one, but there had already been enough awful tweets that the viral story of “Tay the racist Hitler-bot” was unstoppable.

****I love that the story of Tay is such perfect clickbait that it was an unstoppable viral story. This creates a narrative about how humans are easily manipulated into endlessly posting on their social networks a story about how a bot trying to act as a human is easily manipulated into saying terrible things on those same social networks. At least we can kill-switch a bot when it gets out of control. But when Twitter decides to misunderstand a story and collectively destroy the reputation and career of a good and decent scientist, not only is it unstoppable, but the millions of people who participate in it feel that their part in the drama was so small that they have no culpability.

***** WHO IS THE REAL MONSTER?!?

Matt is a software engineer, data vis designer, genetics data hobbyist, and technical educator based in Seattle. He tweets under @politicalmath, where he is occasionally right about some things.